NephMadness 2015: Critical Care Nephrology Region

The critical care region is packed with interesting storylines. SLED was a bubble team that not many people thought would make the tournament, but they navigated their way through the selection process to face an experienced conventional renal replacement therapy (RRT) team. Will team SLED be able to outlast conventional RRT? Another new face is the furosemide stress test. They got hot at the end of the season and get to face the urinary indices, a team that just got taken to the woodshed in their conference tournament by NephroCheck. It’s hard to imagine they have anything left in the tank. It is always compelling when longtime rivals like norepinephrine and vasopressin get paired up in a first-round matchup. Norepinephrine has had the upper hand for a while, but maybe this is the year of vasopressin. But the most interesting storyline is team MAP versus team early goal directed therapy. Both of these teams took it on the chin in 2014 with devastating RCT results. We’re amazed they even made it to the tournament. This matchup might be a contest of who’s suffering less.

Selection Committee member for the Critical Care Nephrology Region:

Lakhmir S. Chawla, MD

Dr. Chawla is an Associate Professor of Medicine; he has dual appointments in the Department of Anesthesiology and Critical Care Medicine and in the Department of Medicine, Division of Renal Diseases and Hypertension at the George Washington University Medical Center. He currently serves as Chief of the Division of Intensive Care Medicine at the Washington DC Veterans Medical Center. Dr. Chawla is board certified in internal medicine, critical care medicine, and nephrology. He is an active investigator in the field of acute kidney injury (AKI), particularly in the areas of inflammation and AKI, AKI biomarkers, AKI risk prediction, AKI as a cause of CKD, and AKI therapeutics. In addition, he is an active investigator in extracorporeal therapies for renal replacement therapy, albumin dialysis, and inflammation. Dr. Chawla is an Associate Editor for the Clinical Journal of the American Society of Nephrology.

Meet the Competitors for the Critical Care Nephrology Region

SLED in Sepsis

Conventional RRT in Sepsis

AKI Indices

Furosemide Stress Test in AKI

Norepinephrine in Sepsis

Vasopressin in Sepsis

Increased MAP in Sepsis

Early Goal Directed Therapy (EGDT)

SLED in Sepsis vs Conventional RRT in Sepsis

Some debates about which team is better can be settled on the court. Want to know if Duke or UNC is better? Good news: they play each other at least twice a year. But other discussions are unanswerable. For instance, is the 2015 University of Kentucky team better than the University of Kentucky team from 2012 (NCAA champions, most wins (38) in a season ever, and 4 first round NBA picks)? This is unanswerable, and the lack of head to head competition allows armchair fans to endlessly debate the question.

Nephrology has its own version of the data-less question. Though intermittent hemodialysis (IHD) has been compared to CRRT in numerous trials, there are no data from a head-to-head matchup of IHD versus SLED. Let the debates begin!

In acute kidney injury there is a moment of clarity: the moment you decide the patient needs renal replacement therapy (RRT). Not only that, you need to decide which type of RRT to use. The delicate balance between keeping a patient dry for the heart and lungs and wet for the kidneys evaporates with the decision to take over the essential task of fluid and solute control with a machine rather than relying on the damaged kidneys. Biomedical engineers have developed novel machines dedicated to ICU patients, CRRT machines, but often those machines or the personnel required to use them are unavailable. In those situations nephrologists are forced to adapt conventional dialysis machines to the unique needs and limitations of septic AKI patients. They can use conventional hemodialysis techniques or a specialized technique such as sustained low efficiency dialysis (SLED).

SLED in Sepsis

Also referred to as prolonged intermittent renal replacement therapy (PIRRT)–and sometimes derided as poor man’s CRRT–SLED is a hybrid form of dialysis that takes the best parts of intermittent hemodialysis and continuous RRT. Some of the goals of this modality are:

- Slower blood flow rates as compared to standard intermittent HD

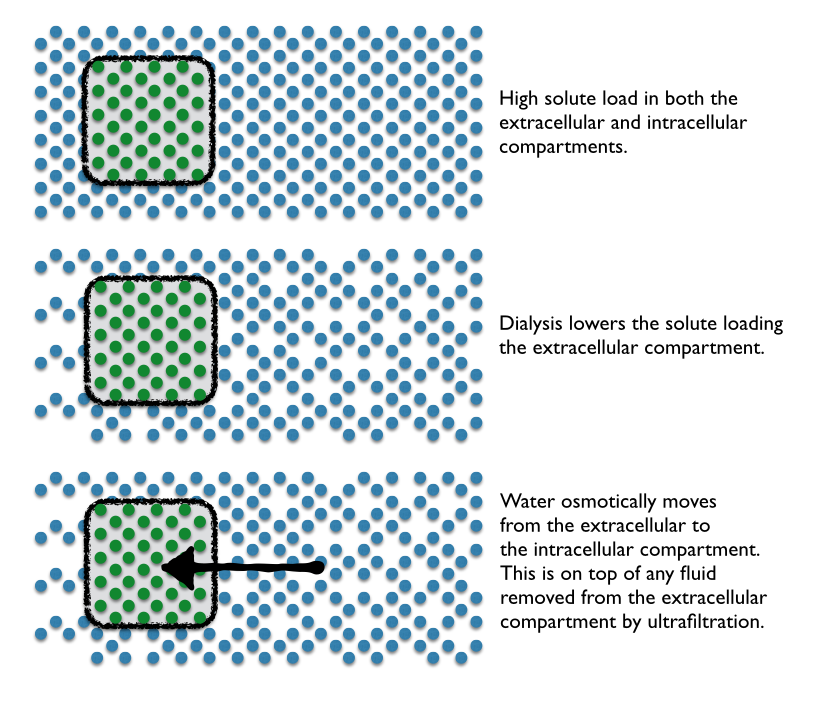

- Slow solute removal to prevent solute disequilibrium

- Slow ultrafiltration to provide hemodynamic stability

- Sustained treatment to maximize dialysis dose

- Intermittency for convenient access to patients for out-of-unit procedures and scheduled down-time.

One of the other advantages of SLED is that it leverages the existing chronic HD equipment and personnel. For example, MD Anderson uses a standard Fresenius 2008H K dialysis machine with the ubiquitous F16nr dialyzers. However, they program these machines completely unlike conventional HD. MD Anderson uses a blood flow of 200 mL/min and a dialysate flow of 100 mL/min. To avoid clotting they run an additional 100 mL per hour of normal saline pre-filter. SLED is also fairly well tolerated in hemodynamically unstable patients. Three quarters of the MD Anderson cohort were on vasopressors and seemed to tolerate the dialysis, as evidenced by a trend toward decreasing vasopressor support over time and the ability to successfully remove fluid with the technique (average of 360 mL/h). The technique also provided good solute control, with an 80% reduction in pre-treatment BUN and a 73% reduction in pre-treatment creatinine by 48 hours.

Berbece and Richardson looked at cost and found daily SLED was about half the cost of CRRT ($1,431/week compared to $3,089 for CRRT with citrate and $2,607 with heparin). The same study also provided urea kinetics and found higher urea clearance with SLED (weekly Kt/V of 8.4 with SLED and 7.1 with CRRT). SLED was well tolerated with no hemodynamic instability in 86% of the treatments.

CRRT has two distinct advantages over SLED that keep CRRT near and dear to the critical care nephrologist. Because CRRT is ‘continuous’, it allows for better volume control. Bouchard and colleagues have demonstrated that patients on CRRT are subject to less volume overload than patients on intermittent HD. The other advantage is drug dosing. When SLED is deployed, the patient has two distinct periods of machine-induced clearance through the day: a period of excellent drug clearance while RRT is running, and then a clearance of zero when it is off. Because of this dichotomy, drug dosing is much more complicated, and to get it right many drugs need to be redosed immediately after SLED. When CRRT is running, drug dosing is much simpler–dose to the clearance that the machine is providing.

SLED has also been shown to be effective in lithium and salicylate toxicity.

Credit: Table reproduced from Chris Nickson, Life in the Fastlane / CC BY-SA 4.0.

Conventional RRT in Sepsis

Conventional intermittent HD has repeatedly been tested against the darling of critical care nephrologists, CRRT. However, despite going head to head in meta-analysis after meta-analysis, intermittent HD continues to hold its head up high. When CRRT and conventional intermittent HD were compared as the initial modality for RRT, there was no significant difference in mortality or renal recovery.

These studies might all be suffering from selection bias, because they typically exclude patients too unstable to tolerate conventional intermittent HD.

Additionally, a recent negative study showed that SLED combined with antimicrobial therapy failed to decrease the high initial plasma IL-6 concentrations seen in patients with sepsis. This matters because high initial plasma IL-6 concentrations have been shown to correlate directly with in-hospital mortality. No data were presented, though, on whether intermittent HD was able to lower IL-6.

Intermittent HD is like Northern Iowa: a throwback to an older style of college basketball, a time when coaches would recruit players to play for 4 years and mature in the program, a time before one-and-done stars. There are loads of theoretical reasons why intermittent HD should be less effective and produce inferior patient outcomes, but it just keeps plugging away, defying the predictions and matching continuous therapies outcome for outcome.

AKI Indices vs Furosemide Stress Test in AKI

In the excitement that surrounds biomarkers, no major biomarker has emerged as a true functional troponin of the kidney. Unfortunately though, without novel drugs or therapies nephrologists are left a bit like the mythic Cassandra, able to predict the coming AKI but unable to alter the outcome.

The discussion of functional markers is important beyond the question of diagnosis. The other critical question nephrologists and intensivists face in AKI is when to initiate renal replacement therapy. As Dr. Chawla wrote:

RRT is an invasive procedure with inherent risks, and one would not want to initiate this therapy if the patient were destined to recover renal function without intervention. However, a more conservative approach of initiating RRT late in the course of the AKI can subject the patient to adverse consequences.

So there is a need for a test that can do more than determine who will develop AKI; it should also hint at the natural history that AKI will take in any individual patient. While biomarkers may play a role in this determination, NephMadness will be going old school to look at two functional markers and how they may help: the traditional AKI indices (FENa, FEUrea, and urine microscopy) versus a new provocative test, the furosemide stress test.

AKI Indices

What physicians are more enamored with equations than nephrologists? The kidney has inspired equations for GFR, proteinuria, sodium correction, acid-base balance, and just about any other renal metric you can put a number to. With smartphones both remembering the equations and doing the math, we are left with too many people who have mathematical precision but lack physiologic context. Even 40 years ago nephrologists were stressing the importance of context in order to understand the equations and numbers that spill out of the chemistry laboratory. Kimmel et al look at how CKD confounds FENa evaluations and discuss other pitfalls in common nephrology equations. It is a good read.
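For readers who want the arithmetic behind the indices spelled out, here is a minimal Python sketch of the standard fractional excretion formula, FE = (urine X × plasma creatinine) / (plasma X × urine creatinine) × 100. The function names and the sample values are hypothetical and chosen only for illustration; the sketch says nothing about how to interpret the result in a given patient.

```python
def fractional_excretion(urine_x, plasma_x, urine_cr, plasma_cr):
    """Fractional excretion of solute X (sodium, urea, ...) as a percentage.

    FE_X = (U_X * P_Cr) / (P_X * U_Cr) * 100
    Urine and plasma values for the same solute must share units.
    """
    if plasma_x <= 0 or urine_cr <= 0 or plasma_cr <= 0 or urine_x < 0:
        raise ValueError("concentrations must be positive")
    return 100.0 * (urine_x * plasma_cr) / (plasma_x * urine_cr)


def urine_plasma_ratio(urine_value, plasma_value):
    """Simple urine/plasma concentration ratio (e.g. for creatinine or urea)."""
    if plasma_value <= 0:
        raise ValueError("plasma value must be positive")
    return urine_value / plasma_value


# Hypothetical numbers, for illustration only:
# urine Na 20 mmol/L, plasma Na 140 mmol/L,
# urine creatinine 100 mg/dL, plasma creatinine 2.0 mg/dL.
fena = fractional_excretion(urine_x=20, plasma_x=140, urine_cr=100, plasma_cr=2.0)
up_creatinine = urine_plasma_ratio(100, 2.0)
print(f"FENa = {fena:.2f}%, U/P creatinine = {up_creatinine:.0f}")
```

The same function gives FEUrea when urine and plasma urea are passed in place of sodium.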

The time-honored renal indices have fallen on hard times as their validity has been questioned. Recently, Pons et al looked at whether FENa, FEUrea, Urine/Plasma Creatinine, or Urine/Plasma Urea could differentiate transient AKI (less than 3 days) from persistent AKI (more than 3 days) among critically ill patients in the ICU. None of the four indices was much better than a coin toss in predicting the duration of AKI (area under the receiver-operating characteristic curve 0.50 to 0.59). A similar trial looking only at FEUrea confirmed these findings.
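To make the "coin toss" comparison concrete: the area under the ROC curve can be read as the probability that a randomly chosen case from one group has a higher test value than a randomly chosen case from the other group. The sketch below computes it that way in plain Python. The two lists are made-up toy numbers, not data from any study, and the assumption that higher values point to persistent AKI is only there to fix a direction.

```python
def auc(scores_positive, scores_negative):
    """Area under the ROC curve via pairwise comparison.

    Returns the fraction of (positive, negative) pairs in which the
    positive case has the higher score; ties count as half a win.
    0.5 means no discrimination, 1.0 means perfect separation.
    """
    wins = 0.0
    for p in scores_positive:
        for n in scores_negative:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_positive) * len(scores_negative))


# Toy index values (hypothetical): the two groups overlap heavily.
persistent = [1.2, 0.4, 2.5, 0.9]
transient = [0.8, 1.5, 0.3, 2.0]
overlapping_auc = auc(persistent, transient)

# A test that separates the groups completely, for contrast.
perfect_auc = auc([3.0, 4.0], [1.0, 2.0])
print(overlapping_auc, perfect_auc)
```

With these toy numbers the overlapping groups land only slightly above 0.5, which is the kind of result the paragraph above describes.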

Interestingly, urine microscopy has been evaluated for its ability to predict AKI prognosis. Bellomo’s group looked at urine microscopy in septic and non-septic AKI and found more tubular cells and granular casts with sepsis. The higher urine microscopy scores also predicted worsening AKI with a specificity of 97% as well as need for RRT and death. Perazella found similar results in his cohort of 249 patients with AKI.

The traditional calculations of renal sodium handling and concentrating ability seem to provide little prognostic information, but the urinalysis, essentially a liquid biopsy of the tubules, provides both diagnostic and prognostic information. Maybe it is time for us to fire up the centrifuge instead of the iPhone apps during AKI consults.

Furosemide Stress Test in AKI

The furosemide stress test (FST) is a provocative test to separate out patients with AKI who are going to progress to higher AKI stages and possibly dialysis from those who will have a less severe course. The FST relies on the intuitive concept that responding to furosemide requires a patient to have a mixture of an adequate GFR, a functional proximal tubule (to move furosemide from the blood into the proximal tubule via organic anion transporter), and a functional thick ascending limb of the loop of Henle. Chawla et al hypothesize that “the kidney’s response or lack of response to a furosemide challenge, as a clinical assessment of tubular function, could identify patients with severe tubular injury before it was clinically apparent.”

In the first publication, Chawla created and validated a standardized approach to using furosemide to assess the likelihood of progressing to more advanced AKI. They gave AKI patients 1 mg/kg of furosemide IV, or 1.5 mg/kg if the patient had received loop diuretics in the previous 7 days. They replaced all urine output mL for mL with isotonic crystalloids to prevent hypovolemia. Retrospectively, it was clear that patients who had progressive AKI had a much poorer response to the FST right from the first hour through the 6 hours of observation, but the biggest spread, the point of maximum differentiation, came at hour two. A urine output of over 200 mL in the first two hours after the FST indicated a lack of progression, with an AUC of 0.87. Sensitivity was 87.1% and specificity was 84.1%. To make matters worse for FENa, its AUC was 0.51, a hair above the threshold of being completely useless.
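The dosing rule and the urine output cutoff described above are simple enough to write down as code. This is a sketch of the arithmetic only, not a clinical tool. The function and parameter names are hypothetical, and the 200 mL threshold is taken directly from the paragraph above.

```python
def fst_dose_mg(weight_kg, loop_diuretic_in_prior_7_days):
    """Furosemide dose for the stress test as described above:
    1 mg/kg IV, or 1.5 mg/kg if loop diuretics were given in the prior 7 days."""
    if weight_kg <= 0:
        raise ValueError("weight must be positive")
    mg_per_kg = 1.5 if loop_diuretic_in_prior_7_days else 1.0
    return mg_per_kg * weight_kg


def fst_responder(urine_output_ml, threshold_ml=200.0):
    """True when urine output over the observation window exceeds the cutoff.

    In the study described above, exceeding the cutoff suggested that the
    AKI was less likely to progress.
    """
    return urine_output_ml > threshold_ml


# Hypothetical 80 kg patients, one diuretic-naive and one not.
naive_dose = fst_dose_mg(80, loop_diuretic_in_prior_7_days=False)
exposed_dose = fst_dose_mg(80, loop_diuretic_in_prior_7_days=True)
print(naive_dose, exposed_dose, fst_responder(250), fst_responder(150))
```

Keeping the threshold as a parameter makes it obvious that the cutoff is a study-derived value rather than a fixed property of the test.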

Koyner et al took a look at the same cohort but used frozen specimens to see if biomarkers added any further information. They found that the FST outperformed NGAL, IL-18, KIM-1, IGFBP-7xTIMP-2 (the recently licensed NephroCheck), urine creatinine, and FENa. From the discussion:

Specifically, FST was significantly better than our complete panel of urinary biomarkers at predicting progression to AKIN stage 3. The addition of biomarkers to FST results did not provide any additional benefit. Similarly, FST outperformed all other biomarkers in predicting the end point of receipt of RRT and inpatient death.

The FST has also been used to determine if a patient can successfully discontinue CRRT.

We have all had an intuitive sense that the patient who doesn’t respond to diuretics is the person more likely to need dialysis and likely to do poorly. The difference between that foreboding and data is the standardized approach created by Chawla. Remember 1 mg/kg should provide 200 mL of urine in 1-2 hours.

Which one will move on to the next round? The urinary tests that predict AKI or the furosemide stress test–both common approaches that we perform on a daily basis. Tough decision here!!

Norepinephrine vs Vasopressin in Sepsis

Sepsis is characterized by increased cardiac output but even greater vasodilation, such that patients are often hypotensive. Initial fluid volumes on the order of 30 mL/kg are typically recommended. Following initial resuscitation, additional volume can be given and should be continued as long as it continues to improve the hemodynamic response. When the response fades and the patient is still hypotensive, vasopressors should be started. When deciding which vasopressor to choose, the physician is confronted with a wide variety of choices: dopamine, epinephrine, norepinephrine, vasopressin, and phenylephrine are all on the menu. NephMadness is throwing two of these gladiators into the arena: norepinephrine and vasopressin. Which one is better for sepsis? How do they affect renal function?

Norepinephrine in Sepsis

Norepinephrine has emerged from the pack of vasopressors on the back of randomized controlled trials, meta-analysis, and international consensus guidelines. The Surviving Sepsis campaign lists norepinephrine as the first-line vasopressor with an evidence grade of 1B. From the rationale:

Norepinephrine increases MAP due to its vasoconstrictive effects, with little change in heart rate and less increase in stroke volume compared with dopamine. Norepinephrine is more potent than dopamine and may be more effective at reversing hypotension in patients with septic shock.

In head to head trials norepinephrine had lower short-term mortality (RR, 0.91) while dopamine had a higher rate of arrhythmias (RR, 2.34).

Nephrologists get skittish with this recommendation because intra-renal norepinephrine has been used in experimental models of ischemic AKI. Forgive us if we don’t want to give our patients the same poison we are using in the lab to induce renal failure. Consistent with this is that decreases in renal blood flow are seen in normal volunteers given norepinephrine.

However, a detailed study of norepinephrine’s effects on renal perfusion, resistance, oxygen supply, and oxygen uptake in post-cardiac surgery patients with AKI (KDIGO grade 1) found surprising results:

- Renal blood flow remained stable across a range of MAPs from 60-90 mm Hg

- Oxygen delivery was highest at a MAP of 75 (higher than at 60 or 90)

- Renal vascular resistance rose with increasing MAP

- GFR was highest at a MAP of 75 (higher than at 60 or 90) and did not go up as blood pressure climbed to a MAP of 90

- Renal oxygen consumption was highest at the low MAP of 60

- Urine flow increased linearly with MAP

The authors concluded that using norepinephrine to increase MAP to the generally recognized target of 75 improved rather than compromised renal perfusion and oxygenation. Further increase of the MAP to 90 mm Hg did not further affect these variables.

Norepinephrine seems to stand strong in sepsis whether you look at renal perfusion and oxygenation or patient survival and adverse events.

Vasopressin in Sepsis

Vasopressin is one of the endogenous hormones secreted in response to hypotension and is known to stimulate a family of receptors, namely AVPR1a, AVPR1b, AVPR2, and oxytocin receptors, as well as purinergic receptors. It causes a catecholamine-independent arterial smooth muscle contraction.

Septic patients’ relationship with vasopressin is complex. Patients have a relative deficiency of circulating vasopressin, particularly in advanced stages, but vasopressin receptor levels are downregulated, as are oxytocin receptors in the heart. This is important because oxytocin receptors cause vasodilation. The loss of oxytocin vasodilation may explain the increased cardiac mortality with vasopressin in some mouse models of ischemia/reperfusion injury.

Early in septic shock, vasopressin spikes, but endogenous vasopressin supplies are quickly exhausted, leaving patients with low levels. Supporters of vasopressin in sepsis cite the following arguments for giving it a major role in the resuscitative management of such patients:

- There is a deficiency of vasopressin in septic shock

- Low-dose vasopressin infusion improves blood pressure

- Low-dose vasopressin decreases norepinephrine requirements

- Low-dose vasopressin improves renal function

The rubber met the road of vasopressin versus norepinephrine in the epic Vasopressin and Septic Shock Trial (VASST). This trial randomized pressor-dependent patients with septic shock to either vasopressin or norepinephrine. The primary outcome was 28-day mortality. The study was double blinded and used a fixed dose of study drug, but the nurses used additional open-label vasopressors to keep MAP at 65-75. The investigators predicted 60% mortality in the norepinephrine group and powered the study to detect a 10% reduction in mortality with vasopressin. However, the mortality rate in the norepinephrine group was only 39%; vasopressin’s mortality was 35.4% (P=0.26). So the drug achieved a relative reduction in mortality of roughly 10%, but the unexpectedly good outcomes of the patients left the study underpowered. Adverse events were similarly balanced between the groups, though there was a trend toward a higher rate of cardiac arrest with norepinephrine (2.1% vs 0.8%, P = 0.14) balanced against a trend toward a higher rate of digital ischemia with vasopressin (2.0% vs 0.5%, P=0.11).
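The difference between an absolute and a relative reduction in mortality is easy to blur when quoting a single "10%", so a short worked example may help. The sketch below computes both from the rounded figures quoted above. Reading the planned effect as an absolute drop from 60% to 50% is an assumption made here for illustration, and the function name is hypothetical.

```python
def risk_reduction(control_rate, treated_rate):
    """Return (absolute risk reduction, relative risk reduction).

    Rates are fractions between 0 and 1. The absolute reduction is the
    difference in rates; the relative reduction divides that difference
    by the control rate.
    """
    if not 0 < control_rate <= 1 or not 0 <= treated_rate <= 1:
        raise ValueError("rates must be fractions between 0 and 1")
    absolute = control_rate - treated_rate
    relative = absolute / control_rate
    return absolute, relative


# Planned scenario (assumption: 60% control mortality falling to 50%).
planned_abs, planned_rel = risk_reduction(0.60, 0.50)

# Observed mortality, using the rounded figures quoted in the text.
observed_abs, observed_rel = risk_reduction(0.39, 0.354)

print(f"planned:  {planned_abs:.1%} absolute, {planned_rel:.1%} relative")
print(f"observed: {observed_abs:.1%} absolute, {observed_rel:.1%} relative")
```

On these rounded inputs the observed absolute difference is 3.6 percentage points and the relative difference is a little over 9%, which shows why a lower-than-expected event rate in the control arm can leave a trial short of power even when the relative effect is in the expected range.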

Though the investigators suspected that vasopressin would provide more protection in more severe sepsis, they actually found a lower mortality in the patients with less severe sepsis (as defined by lower norepinephrine infusion rates at baseline). The authors cautioned that these were secondary outcomes and were not adjusted for multiple comparisons.

Interestingly, a post hoc analysis of the VASST study that looked at patients with AKI (RIFLE Criteria risk) suggested that septic patients treated with vasopressin had decreased risk of progression to more advanced stages of AKI and a trend to decreased mortality. However, the authors cautioned that perhaps the observed beneficial effects of vasopressin may have been actually secondary to a decreased exposure to norepinephrine.

At this time, vasopressin still seems to be a drug on the precipice. VASST was its chance to shine but due to the excellent care and improved sepsis survival, it was unable to meet expectations. The most recent meta-analysis continues to show what the authors of the Surviving Sepsis campaign concluded: good enough for second line; not yet ready for prime time.

Increased MAP vs Early Goal Directed Therapy in Sepsis

This is a contest between two ideas in critical care that have failed after high-profile randomized controlled trials. One, increased MAP targets, was trying to muscle out the long-time standard of care MAP of 65 mm Hg, while the other, Early Goal Directed Therapy (EGDT), has been a staple of sepsis resuscitation for over a decade.

Increased MAP in Sepsis

It has been a time-honored dictum that “a higher MAP is better than a lower MAP.” This is supported by observational studies showing lower MAP in patients who develop AKI and increased need for RRT when the MAP was below 75 mm Hg. This data is bolstered by a small interventional study that demonstrated improved urine output when the MAP was increased from 65 to 75, but without further improvements at 85. Likewise, it has been shown that prolonged hypotension (MAP < 60 mm Hg) is associated with increased mortality.

A target MAP of > 65 mm Hg has been used by most studies based on the premise that lactate clearance was diminished when targeting lower MAPs. However, the upper MAP threshold has remained controversial. It has been shown that maintaining a MAP > 70 mm Hg at the expense of increased vasopressor dose and duration coincided with increased mortality.

In 2002, the European Society of Intensive Care Medicine and the Society of Critical Care Medicine partnered to form the Surviving Sepsis Campaign (SSC). Their stated goal was to investigate physicians’ views on sepsis, focusing on current definitions, routes to diagnosis, and treatment options. In 2012, the SSC published the International Guidelines for Management of Severe Sepsis and Septic Shock. In the section on hemodynamic support and adjunctive therapy, the guidelines made a grade 1C recommendation to target a MAP of 65 mm Hg as an initial goal, though they advise practitioners to consider a higher goal for patients with a history of hypertension or atherosclerosis. Additionally, they advise that frequent assessment of end-organ perfusion, such as urine output, lactate, mental status, and skin perfusion, supplement the blood pressure data.

In 2014, the multi-center, open-label trial SEPSISPAM supported these recommendations. A total of 776 patients with septic shock were randomized to either a high-target MAP of 80-85 mm Hg or a low-target MAP of 65-70 mm Hg. Neither 28-day nor 90-day mortality differed significantly between the groups. The study also supports the secondary recommendation to individualize MAP targets, as there was less need for RRT among patients with a history of hypertension who were randomized to the higher blood pressure target.

Early Goal Directed Therapy

Occasionally there are advances in medicine that make a clean break between before and after. In sepsis that occurred with the publication of Rivers’ Early Goal-directed therapy (EGDT). Rivers’ study was published just a handful of months after activated protein C made a splash with PROWESS. But while PROWESS offered a phenomenally expensive treatment with a significant associated risk of bleeding to provide a 20% improvement in survival, EGDT promised a 35% reduction in mortality by providing a logical, intuitive, systematic approach to improving perfusion in sepsis. Rivers’ approach was hailed as a breakthrough and put in place as the standard of care worldwide.

The Rivers study was a single-center RCT with 263 patients with severe sepsis (2 of 4 SIRS criteria and either a lactate > 4 mmol/L or SBP <90 after fluid resuscitation). They randomized 263 patients to either protocol-based therapy or standard therapy. The protocol focused on three goals to improve perfusion:

- Increase the central venous pressure to 8-12 mm Hg. The protocol used IV colloids or crystalloids to achieve that.

- Get and regulate the MAP to between 65 and 90 mm Hg. The protocol used vasopressors and vasodilators to achieve that.

- Get the central venous oxygen saturation (ScvO2) over 70%. The protocol used transfusions and inotropic agents to achieve that.
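The three goals above can be sketched as a simple check of which targets a set of measurements has met and which of the listed interventions the protocol would reach for. This is an illustration of the structure of the protocol as summarized here, not the published algorithm and not clinical software. The class, field, and function names are hypothetical; treating exactly 70% as meeting the saturation goal, and checking the three goals independently rather than in sequence, are simplifying assumptions.

```python
from dataclasses import dataclass


@dataclass
class Hemodynamics:
    cvp_mmhg: float            # central venous pressure
    map_mmhg: float            # mean arterial pressure
    venous_o2_sat_pct: float   # venous oxygen saturation, percent


def egdt_unmet_goals(h):
    """List the goals from the summary above that are not yet met,
    paired with the class of intervention the text names for each."""
    steps = []
    if h.cvp_mmhg < 8:
        steps.append("CVP below 8 mm Hg: IV colloids or crystalloids")
    if h.map_mmhg < 65:
        steps.append("MAP below 65 mm Hg: vasopressors")
    elif h.map_mmhg > 90:
        steps.append("MAP above 90 mm Hg: vasodilators")
    if h.venous_o2_sat_pct < 70:
        steps.append("Venous O2 saturation below 70%: transfusion or inotropes")
    return steps


# Hypothetical readings: one patient at goal, one far from it.
at_goal = egdt_unmet_goals(Hemodynamics(cvp_mmhg=10, map_mmhg=75, venous_o2_sat_pct=72))
off_goal = egdt_unmet_goals(Hemodynamics(cvp_mmhg=5, map_mmhg=60, venous_o2_sat_pct=60))
for step in off_goal:
    print(step)
```

An empty list means all three targets are within the ranges given in the bullets above.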

The main concept underlying EGDT is that generalized tissue hypoxia precedes overt hypotension, and early recognition of this situation allows one to optimize oxygen delivery to tissues. By utilizing an organized approach to certain hemodynamic parameters (CVP, MAP, ScvO2), one can avert the complications that may arise from tissue underperfusion. This straightforward protocol became the mantra of sepsis care for a decade. The 2012 International Guidelines for Management of Severe Sepsis and Septic Shock gave EGDT a 1C recommendation. This adoption of protocolized care led to significant improvements in morbidity and mortality rates (10-12% decrease in mortality nationally). Adherence to EGDT has translated to a 20% decrease in hospitalization-related costs and decreases in length of hospitalization by 4-5 days.

But all good things must come to an end…

In 2014, ProCESS (Protocolized Care for Early Septic Shock) was published. This 5-year, 31-center trial randomized 1,341 patients to one of three resuscitation strategies for early septic shock:

- Protocol-based EGDT: 439 patients

- Protocol-based Standard Therapy (DID NOT require a CVC, inotropic drugs, or blood transfusions): 446 patients

- Usual Care: 456 patients

The primary outcome was in-hospital death from any cause at 60 days and no difference among the three protocols could be detected. Interestingly, the authors used an estimate of 30-45% mortality for their power calculation based on the Rivers study but the 21st century has been kind to sepsis patients and they found only 20% mortality. The authors concluded that “protocol-based resuscitation of patients in whom septic shock was diagnosed in the emergency department did not improve outcomes.” Of note, the incidence of AKI was higher in the Protocol-based Standard Therapy (6% vs 3% in the other groups).

A second study from Australia/New Zealand, also published in 2014, came to much the same conclusions. The ARISE study was done in 51 emergency departments. They randomized 1,600 patients to EGDT or usual care. They also found no difference between therapies, against a background of dramatically lower mortality from sepsis.

Did EGDT only offer advantages in a world that was not as good at treating sepsis as it is now? Was EGDT an important step in getting people familiar with the goals and tools for treating sepsis such that after a decade and a half we no longer need a protocol, we do it by nature? Or was EGDT just a small single-center study subject to bias and inflated effect size?

When all is said and done, we can conclude that EGDT per se is not better than good old-fashioned bedside titration of care, but there are some real advances that we have taken from the original EGDT study that we must acknowledge.

- First, elevated lactate levels often allow us to ‘reveal’ shock early in the course of the disease and lactate clearance is an excellent marker for improved outcomes.

- Second, none of the new trials compared ‘late’ therapy to ‘early’ therapy, they only compared protocols on how early therapy is conducted.

- Third, all emergency departments and ICUs now recognize that ‘shock’ is a medical emergency, and that prompt resuscitation is mandatory.

This was not always the case – many a patient sat in the ED after getting their 1 liter of saline awaiting an ICU bed prior to the publication of the original EGDT study. There is no going back. Early is better, and although this should have been obvious, we can thank the EGDT investigators for making it abundantly clear.

– Post written and edited by Drs. Joel Topf, Edgar Lerma, and Lakhmir Chawla.
